In the last four months, the federal government has released a series of AI-related documents, strategies, proposed rules, and requests for information that will influence how artificial intelligence (AI) will be used in and around public health. This blog post breaks down the key documents, identifies the most substantive elements, and considers implications for state and local public health.

The Policy Cascade

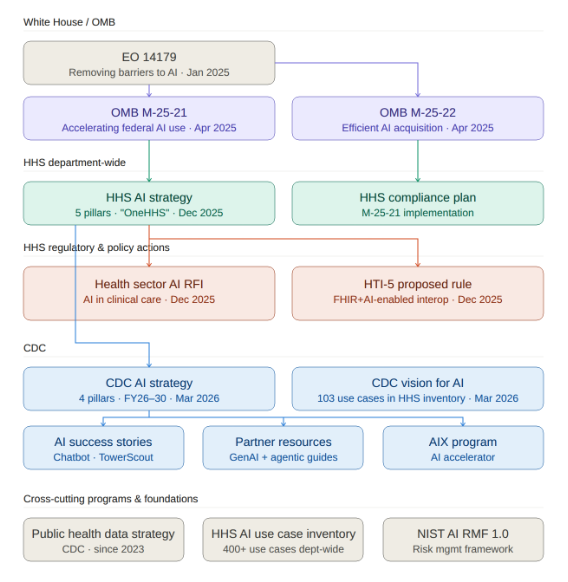

The key policy and regulatory documents flow from the top down:

At the top is Executive Order 14179 (“Removing Barriers to American Leadership in Artificial Intelligence”), signed in January 2025, which indicated that the federal government wants to accelerate AI adoption and clear away regulatory obstacles. That EO directed OMB to issue implementation guidance, which arrived in April 2025 as M-25-21 (accelerating federal AI use) and M-25-22 (streamlining AI procurement). These memos required every federal agency to develop AI strategies, appoint Chief AI Officers, and create governance structures. Also stemming from the EO, a Request for Information (RFI) on the Development of an AI Action Plan was released in February 2025, followed by the publication of America’s AI Action Plan.

HHS released its AI Strategy and an accompanying Compliance Plan in December 2025. In the same month, HHS also released an RFI on AI in clinical care and the HTI-5 proposed rule, which contains a number of AI provisions. (Separately that same month, FDA announced that it had deployed agentic AI tools for its workforce.)

The CDC AI Strategy was published in March 2026 as part of a new CDC AI website. And underpinning all of the HHS and CDC AI initiatives are cross-cutting foundations like CDC’s Public Health Data Strategy (from 2023), the HHS AI Use Case Inventory, and NIST’s AI Risk Management Framework (from 2024).

The HHS AI Strategy

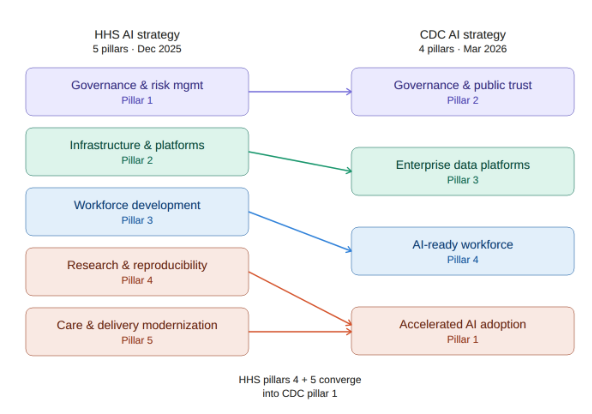

The HHS AI Strategy is organized around five pillars: governance and risk management, infrastructure and platforms, workforce development, research and reproducibility, and care and public health delivery modernization. It’s a 21-page document that reads mostly as high-level vision: broad commitments to “manage risk,” “ensure ethics,” and develop an AI-capable workforce. It lacks specific technical standards or immediate funding vehicles, and functions more as a mission statement than an implementation plan.

The strategy is more substantive in describing how HHS wants to buy and manage technology. It introduces a “OneHHS” model, consolidating data, infrastructure, and AI tools across agencies (FDA, CDC, CMS, NIH) rather than letting every agency buy its own disjointed tools. Instead of agency-specific solutions, HHS wants centralized “infrastructure and platforms.”

In practice, this may favor large contractors who can build cross-agency platforms. The strategy does mention establishing “testbed environments” and sharing models, which could leave room for smaller, more specialized vendors to participate and innovate.

The Health Sector AI RFI

The Health Sector AI RFI (“Accelerating the Adoption and Use of Artificial Intelligence as Part of Clinical Care”), published the same week as the HHS AI Strategy, asked the public how HHS should use its regulatory, reimbursement, and R&D influence to accelerate AI adoption in clinical care. It drew thousands of comments from hospitals, medical societies, health plans, and tech companies. The major themes in the comments included the need for a unified federal regulatory framework (rather than a patchwork of state laws), new reimbursement pathways for AI-assisted services, and transparency requirements for AI used in prior authorization. These are primarily clinical and payer concerns; the RFI does not address public health, immunization, or population-level surveillance. As we noted in our coverage of the 2026 ASTP Annual Meeting, the RFI comment period and the broader AI policy push represented an opportunity for public health to provide feedback from its unique perspective, but the absence of public health voices in these conversations remains a pattern. Among all the comments, we could not find any from public health-related organizations.

The CDC AI Website and Strategy

CDC launched its AI website in March, with four sections: a vision statement, the AI strategy, success stories, and resources for public health partners. The site showcases CDC’s enterprise generative AI chatbot (“ChatCDC,” built on Azure OpenAI / Microsoft Copilot), which was noteworthy when CDC adopted it in 2024 (as the first federal agency to deploy an enterprise-wide GenAI chatbot), but is now essentially standard practice for organizations with M365/Copilot Studio subscriptions. The other highlighted success story is TowerScout, a computer vision tool for detecting cooling towers during Legionnaires’ disease investigations. TowerScout is an interesting application, though it’s a pre-GenAI-era machine learning project.

The CDC AI strategy is organized around four pillars. Here’s how they compare to the parent HHS strategy:

HHS keeps research and delivery modernization as separate pillars, while CDC has a single “Accelerated AI adoption” pillar (Pillar #1). This is where CDC calls for deploying “agentic AI systems” for “adaptive, goal-driven automation.”

The CDC strategy also extends outward in ways the HHS strategy does not. CDC’s Pillar 2 (governance) includes publishing resources for state, tribal, local, and territorial (STLT) public health agencies. Pillar 4 (workforce) calls for promoting AI workforce capability among STLT partners. The Resources for Public Health Partners page has two guidance documents: considerations for generative AI in public health and considerations for agentic research in public health.

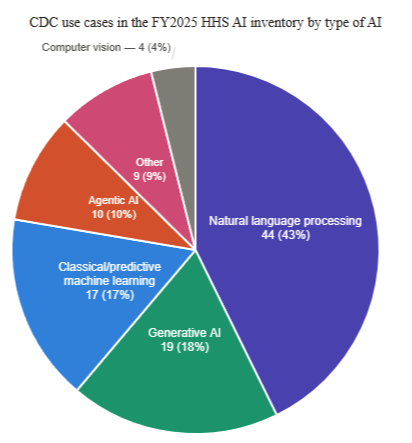

The HHS AI Use Case Inventory

One of the most useful resources on the CDC AI site is actually a link off the site. The HHS AI Use Case Inventory is a downloadable CSV file containing over 400 AI use cases across HHS (for FY2025), about 100 of which are CDC’s. The inventory lists actual projects, with vendors named, deployment stages identified, and AI techniques categorized.

For immunization programs and Immunization Information Systems (IIS), there are several use cases worth tracking:

- Immunization Information Systems Guidance Navigation (Pre-deployment, GenAI): Uses Azure OpenAI to let staff query and update IIS guidance documents.

- Databricks Genie for Routine Immunization Data Insights (Pilot, GenAI): LLM-based natural language querying over immunization records held by CDC.

- VTrckS Conversational AI Pilot (Pilot, GenAI): An AI chat interface over VTrckS data, replacing SAP dashboards.

- Automating Influenza Vaccine Virus Data Processing (Pilot, GenAI): Uses GenAI to extract candidate vaccine virus data from PDFs and websites, replacing manual R scripts.

- Reviewing Global Influenza Vaccine Literature (Pilot, Natural language processing): Automated literature screening for influenza vaccine access research.

- AI-Powered Web Scanner for Rabies-Related News (Pilot, Agentic AI): Surveillance for rabies outbreaks to inform traveler vaccine decisions.

Most of these are in pilot or pre-deployment stages, but they suggest the direction CDC is heading: using AI to make large datasets queryable in natural language, automate document-heavy workflows, and monitor real-world signals for public health action.
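Because the inventory is distributed as a CSV, readers who want to find the use cases relevant to their own program area can filter it with a few lines of Python. The sketch below is illustrative only: the column names (`Agency`, `Use Case Name`, `Stage`, `AI Technique`) are assumptions and may not match the headers in the actual file, and the sample rows are a small stand-in built from the use cases listed above plus one placeholder non-CDC row.

```python
import csv
import io

# Stand-in for the downloaded inventory file. Column names are hypothetical;
# check the real CSV's header row and adjust accordingly.
SAMPLE_CSV = """Agency,Use Case Name,Stage,AI Technique
CDC,Immunization Information Systems Guidance Navigation,Pre-deployment,GenAI
CDC,VTrckS Conversational AI Pilot,Pilot,GenAI
CDC,AI-Powered Web Scanner for Rabies-Related News,Pilot,Agentic AI
FDA,Placeholder Non-CDC Use Case,Pilot,GenAI
"""


def load_rows(text):
    """Parse CSV text into a list of dicts keyed by the header row."""
    return list(csv.DictReader(io.StringIO(text)))


def filter_use_cases(rows, agency, keywords):
    """Return rows for one agency whose name contains any keyword (case-insensitive)."""
    wanted = [k.lower() for k in keywords]
    return [
        row
        for row in rows
        if row["Agency"] == agency
        and any(k in row["Use Case Name"].lower() for k in wanted)
    ]


rows = load_rows(SAMPLE_CSV)
matches = filter_use_cases(rows, "CDC", ["immunization", "vaccine", "vtrcks"])
for row in matches:
    print(f'{row["Use Case Name"]} ({row["Stage"]}, {row["AI Technique"]})')
```

To run this against the real file, replace `load_rows(SAMPLE_CSV)` with a `csv.DictReader` over the downloaded file and swap in the actual column names and whatever keywords matter to your program.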

HTI-5 and AI

Additional AI policy appears in the HTI-5 proposed rule (see our blog post on HTI-5). HTI-5 is primarily a deregulatory action: the Assistant Secretary for Technology Policy (ASTP, formerly ONC) is proposing to remove many of the existing ONC Health IT Certification Program criteria in order to focus primarily on FHIR and to help set the stage for increased adoption of AI. AI-related highlights include:

- AI model card requirements would be eliminated. Under HTI-1, health IT developers were required to provide detailed “model cards” for predictive decision support interventions, including AI-based tools. These cards were like a nutrition label showing how the AI was trained, what data it used, and what its known risks are. HTI-5 proposes to remove these transparency requirements entirely. It is worth noting the tension here: the HHS AI Strategy emphasizes governance and public trust while ASTP is simultaneously proposing to roll back transparency requirements for AI tools used in clinical settings (in the spirit of rapid adoption).

- Information blocking definitions would be updated to include AI. HTI-5 proposes to revise the definitions of “access” and “use” to explicitly include automated and AI-driven processes. This means that blocking an AI system’s access to electronic health information could be treated the same as blocking a human’s access.

- The rule explicitly names Model Context Protocol (MCP), an AI interoperability standard. HTI-5 describes resetting the Certification Program to allow “more creative artificial intelligence (AI)-enabled interoperability solutions that combine FHIR with newer standards to emerge, such as Model Context Protocol (MCP) which ‘standardizes how applications provide context to Large Language Models (LLMs).’”

The comment period for HTI-5 closed February 27, 2026, but no final rule has been issued yet.

Implications for State and Local Public Health

The federal government is building the policy framework for AI in public health, but the path from framework to implementation is not entirely clear. Between the OMB memos, the HHS strategy, the CDC strategy, and HTI-5, there is no shortage of directives, pillars, and strategic objectives. CDC’s strategy calls for promoting AI capability at the STLT level and publishing resources to help partners adopt AI. What is absent from all of these documents is funding. None of the strategy documents identifies specific funding vehicles, grant programs, or resource commitments to support AI adoption at the state and local level. For our partners in public health who are already operating with constrained budgets, a policy framework without funding does not go very far.